Currently Empty: ₹0.00

Asymptotic Notations: Big Oh, Big Theta and Big Omega Notation

- The Three Primary Asymptotic Notations

- 1. Big-O Notation (O) – Upper Bound / Worst Case

- Step-by-Step Big-O Analysis

- Common Complexity Classes

- 2. Big-Omega Notation (Ω) – Lower Bound / Best Case

- Important Clarifications About Ω Notation

- 3. Big-Theta Notation (Θ) – Tight Bound / Exact Growth Rate

- Key Points About Θ Notation

- Summary: When to Use Each Notation

Asymptotic Notations are mathematical tools used to characterize the efficiency of algorithms by describing how their resource requirements (time and memory) grow as the input size increases toward infinity. They provide a machine-independent way to compare algorithms by focusing on growth rates while ignoring constant factors and lower-order terms.

If we think of an algorithm’s performance as a function f(n) where n represents the input size, asymptotic notations allow us to classify f(n) into broad categories like linear, quadratic, or logarithmic. This abstraction helps us understand algorithmic scalability without getting bogged down in implementation-specific details.

The Three Primary Asymptotic Notations

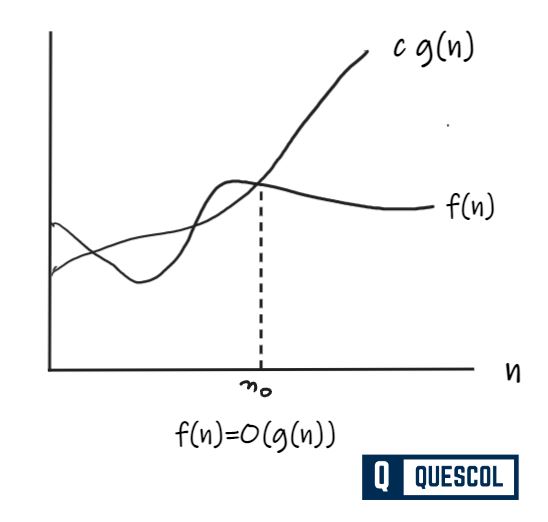

1. Big-O Notation (O) – Upper Bound / Worst Case

Big-O Notation is commonly denoted by O, is an Asymptotic Notation for the worst-case analysis of an algorithm. Big-O notation describes the worst-case performance of an algorithm by defining an upper bound on its growth rate. It answers: “What is the maximum number of operations this algorithm might perform for an input of size n?”

Formal Definition: A function f(n) is O(g(n)) if there exist positive constants c and n₀ such that:

0 ≤ f(n) ≤ c·g(n) for all n ≥ n₀.

We say “f(n) is Big-O of g(n)” or “f(n) grows no faster than g(n).”

Example – In Insertion Sort:

- Best case: O(n) – when the input array is already sorted

- Worst case: O(n²) – when the input array is reverse-sorted

- Average case: O(n²) – for randomly ordered input

Since algorithm analysis typically focuses on worst-case performance guarantees, we say Insertion Sort is an O(n²) algorithm.

Step-by-Step Big-O Analysis

- Identify the input size parameter

n. - Express the algorithm’s maximum number of basic operations in terms of

n. - Focus on the fastest-growing term as

n → ∞. - Drop all constant factors and lower-order terms.

Why Constants Are Ignored: The constant c in the definition absorbs machine-dependent factors like programming language, compiler optimizations, processor speed, and memory architecture. By ignoring these, Big-O captures the algorithm’s intrinsic efficiency.

Proof Example

Show that f(n) = n² + n is O(n³).

For n ≥ 1: n² ≤ n³ and n ≤ n³.

Thus: n² + n ≤ n³ + n³ = 2n³ for all n ≥ 1.

Therefore, with c = 2 and n₀ = 1, n² + n = O(n³).

| Function f(n) | Big-O Classification | Common Name |

|---|---|---|

| 10, 100, 1000 | O(1) | Constant Time |

| 3n + 5, 2n – 10 | O(n) | Linear Time |

| 3n² + 5n + 7 | O(n²) | Quadratic Time |

| 2n³ + 3n² + 5n – 10 | O(n³) | Cubic Time |

| 5 log n + 10 | O(log n) | Logarithmic Time |

Common Complexity Classes

- O(1): Constant time – execution time doesn’t depend on input size

- O(log n): Logarithmic time – execution grows slowly as input increases (e.g., binary search)

- O(n): Linear time – execution time proportional to input size

- O(n log n): Linearithmic time – efficient sorting algorithms (Merge Sort, QuickSort average case)

- O(n²): Quadratic time – nested loops over input (Bubble Sort, Selection Sort, Insertion Sort)

- O(2ⁿ): Exponential time – brute-force solutions, impractical for large inputs

2. Big-Omega Notation (Ω) – Lower Bound / Best Case

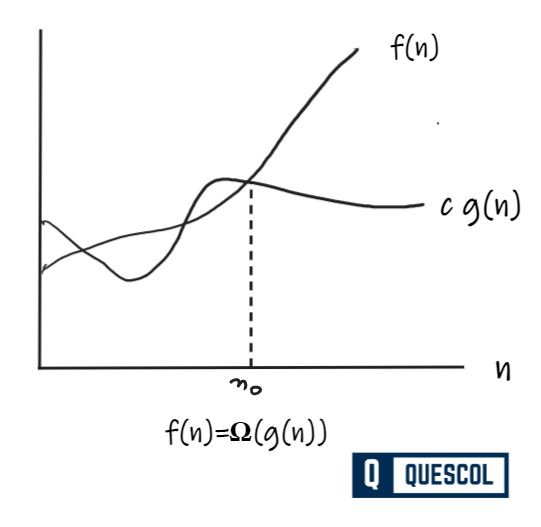

Big-Omega notation describes the best-case performance or a fundamental lower bound of an algorithm. It answers: “What is the minimum number of operations this algorithm must perform, even under ideal conditions?”

Formal Definition: A function f(n) is Ω(g(n)) if there exist positive constants c and n₀ such that:

0 ≤ c·g(n) ≤ f(n) for all n ≥ n₀.

We say “f(n) is Big-Omega of g(n)” or “f(n) grows no slower than g(n).”

Example: Any comparison-based sorting algorithm requires at least Ω(n log n) comparisons in the worst case. This is a fundamental lower bound for the sorting problem.

Proof Example: Show that f(n) = n³ + 4n² is Ω(n²).

For n ≥ 1: n³ ≤ n³ + 4n² and n² ≤ n³.

Thus: n² ≤ n³ ≤ n³ + 4n² for all n ≥ 1.

Therefore: 1·n² ≤ n³ + 4n² for all n ≥ 1.

With c = 1 and n₀ = 1, n³ + 4n² = Ω(n²).

Important Clarifications About Ω Notation

- Best-case Ω refers to a specific algorithm’s optimal performance (e.g., Insertion Sort is Ω(n)).

- Fundamental lower bound Ω refers to the minimum operations required by any algorithm solving that problem (e.g., comparison sorting is Ω(n log n)).

- When we simply write Ω for an algorithm without qualification, it typically refers to its best-case performance.

- Ω can also describe worst-case lower bounds (e.g., “this algorithm is Ω(n²) in the worst case”).

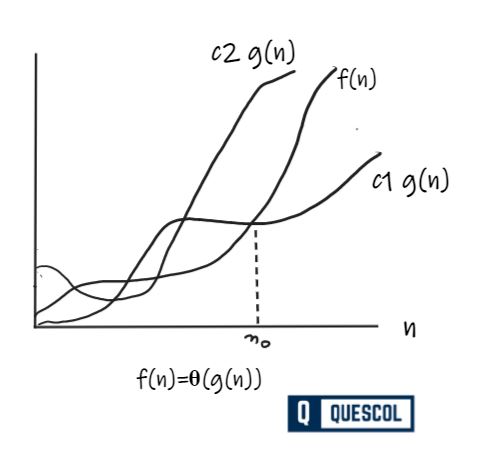

3. Big-Theta Notation (Θ) – Tight Bound / Exact Growth Rate

Big-Theta notation describes an algorithm’s exact asymptotic growth rate when its upper and lower bounds coincide (within constant factors). It provides the most precise characterization of an algorithm’s efficiency.

Formal Definition: A function f(n) is Θ(g(n)) if there exist positive constants c₁, c₂, and n₀ such that:

0 ≤ c₁·g(n) ≤ f(n) ≤ c₂·g(n) for all n ≥ n₀.

This means f(n) = O(g(n)) AND f(n) = Ω(g(n)).

Example – Merge Sort: Has time complexity Θ(n log n). This means:

- It is O(n log n): never takes more than constant × n log n operations

- It is Ω(n log n): always requires at least constant × n log n operations

Thus its growth rate is precisely proportional to n log n.

Proof Example: Show that f(n) = n² + 5n + 7 is Θ(n²).

For n ≥ 1:

- Upper bound:

n² + 5n + 7 ≤ n² + 5n² + 7n² = 13n² - Lower bound:

n² ≤ n² + 5n + 7(all terms are non-negative forn ≥ 0)

Thus for n ≥ 1: 1·n² ≤ n² + 5n + 7 ≤ 13·n².

With c₁ = 1, c₂ = 13, and n₀ = 1, n² + 5n + 7 = Θ(n²).

Key Points About Θ Notation

- Θ describes tight bounds, not specifically average case (though for many algorithms, average case equals worst case).

- When an algorithm’s best-case and worst-case complexities have the same growth rate (e.g., Merge Sort is Θ(n log n) in all cases), Θ perfectly describes its performance.

- If we simply write Θ for an algorithm, it typically describes its worst-case tight bound.

- Θ is the most informative notation when available, as it gives both upper and lower bounds.

Summary: When to Use Each Notation

| Notation | Purpose | Analogy | Common Use |

|---|---|---|---|

| Big-O (O) | Upper bound / Worst-case guarantee “Takes at most this time” | “The commute takes at most 1 hour” | Algorithm analysis, performance guarantees |

| Big-Omega (Ω) | Lower bound / Best-case or fundamental limit “Takes at least this time” | “The commute takes at least 20 minutes” | Optimal performance, problem complexity lower bounds |

| Big-Theta (Θ) | Tight bound / Exact growth rate “Takes proportional to this time” | “The commute takes about 45 minutes” | Precise characterization when bounds match |

In practice, Big-O is most commonly used because it provides the guarantee we care about most: how bad can performance get? Big-Theta is preferred when we can establish matching upper and lower bounds. Big-Omega helps us understand optimal performance or prove that no algorithm can solve a problem faster than a certain rate.