Currently Empty: ₹0.00

- Why CPU Scheduling is Needed?

- Purposes of CPU Scheduling:

- Types of CPU Scheduling Algorithm

- 1. Preemptive Scheduling

- (a) Shortest Remaining Time First (SRTF)

- (b) Round Robin (RR)

- (c) Priority Scheduling (Preemptive)

- (d) Multilevel Queue Scheduling

- (e) Multilevel Feedback Queue Scheduling

- 2. Non-Preemptive Scheduling

- (a) First-Come, First-Served (FCFS)

- (b) Shortest Job First (SJF – Non-preemptive)

- (c) Priority Scheduling (Non-preemptive)

- (d) Highest Response Ratio Next (HRRN)

- FAQs

- Q1. Which CPU scheduling algorithm is best for time-sharing systems?

- Q2. Which algorithm gives minimum average waiting time?

- Q3. How does aging solve starvation in priority scheduling?

- Q4. What makes MLFQ better than MLQ?

- Q5. Is Lottery Scheduling used in real OS?

- Conclusion

CPU scheduling is the method used by the operating system to decide which process gets CPU time when multiple processes are in the ready queue. Since the CPU can execute only one process at a time, efficient scheduling ensures maximum CPU utilization, minimal waiting time, and smooth multitasking

Why CPU Scheduling is Needed?

In a multiprogramming environment, if the OS does not schedule tasks effectively:

- The CPU may stay idle, especially when processes are I/O-bound.

- System performance drops due to poor resource utilization.

Purposes of CPU Scheduling:

- Maximize CPU utilization.

- Minimize response time and waiting time.

- increase throughput (number of processes completed per time unit).

- Enhance system responsiveness for users.

Key Point: CPU scheduling ensures that when the CPU is free, the OS selects a process from the ready queue to keep the system active and efficient.

Read: Various CPU Scheduling Terminologies

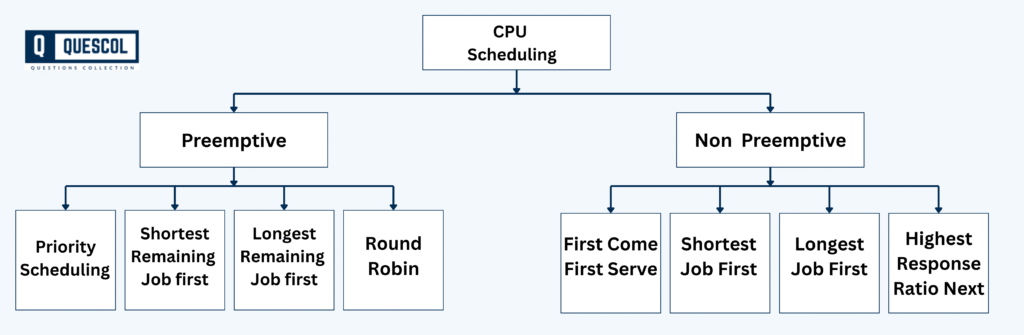

Types of CPU Scheduling Algorithm

CPU scheduling algorithm are generally classified first into two broad categories:

1. Preemptive Scheduling

In preemptive scheduling, a running process can be interrupted and moved back to the ready queue if a higher-priority process arrives or the time quantum expires. This method improves system responsiveness and ensures fair CPU sharing. Preemptive scheduling ensures better responsiveness and fairness, especially in interactive systems, but it can also increase overhead due to frequent context switching.

Examples of Preemptive Scheduling:

- Shortest Remaining Time First (SRTF) → preemptive version of SJF

- Round Robin (RR)

- Priority Scheduling (when implemented as preemptive)

- Multilevel Queue (preemptive version)

- Multilevel Feedback Queue

Below are the detailed explanations of each Preemptive Scheduling algorithms

(a) Shortest Remaining Time First (SRTF)

This is the preemptive version of Shortest Job First (SJF). In this method, the scheduler always selects the process that has the least remaining burst time. If a new process arrives with a shorter burst time than the currently running process, the CPU is preempted and assigned to the new process. This ensures minimum average waiting time. However, it can lead to starvation of longer processes, as they may keep getting delayed if shorter jobs continue arriving.

- Idea: Preemptive version of SJF; process with least remaining burst time executes first.

- Advantages: Minimizes average waiting time.

- Disadvantages: Longer processes may starve.

(b) Round Robin (RR)

Round Robin is one of the most popular CPU scheduling methods for time-sharing systems. Each process is assigned a fixed time quantum (time slice) and processes are executed in a cyclic order. If a process does not finish within its allocated quantum, it is preempted and placed at the back of the queue, and the CPU is given to the next process. This method is fair, as every process gets equal CPU time. However, its performance depends heavily on the size of the time quantum — too small a quantum causes frequent context switches, while too large a quantum makes it behave like FCFS.

- Idea: Each process gets a fixed time quantum in cyclic order.

- Advantages: Fair for all processes, ideal for time-sharing.

- Disadvantages: Performance depends on time quantum size.

(c) Priority Scheduling (Preemptive)

In preemptive priority scheduling, each process is assigned a priority value. The CPU is allocated to the process with the highest priority, but if a new process arrives with a higher priority, it immediately preempts the current process. This ensures that urgent or time-critical tasks are executed without delay. The problem, however, is starvation — lower-priority processes may keep waiting indefinitely if higher-priority processes continue to arrive.

- Idea: CPU goes to the process with the highest priority.

- Advantages: Useful in systems where certain tasks are more important than others; ensures urgent tasks run quickly.

- Disadvantages: Lower-priority processes may suffer from starvation if higher-priority tasks keep arriving.

(d) Multilevel Queue Scheduling

In this method, processes are divided into multiple queues based on their type (for example, system processes, interactive processes, and batch processes). Each queue has its own scheduling policy (e.g., Round Robin for interactive processes and FCFS for batch jobs). CPU time is allocated among the queues based on predefined priorities. While this method is effective in handling different types of processes, it is rigid — once a process is placed in a queue, it cannot move to another queue, which may lead to starvation of lower-priority queues.

- Idea: Processes are divided into multiple queues, each with its own policy.

- Advantages: Good at handling different process types.

- Disadvantages: Rigid; may cause starvation of lower-priority queues.

(e) Multilevel Feedback Queue Scheduling

This algorithm is an improved version of multilevel queue scheduling. The key difference is that processes are allowed to move between queues based on their behavior and requirements. For example, if a process uses too much CPU time, it may be moved to a lower-priority queue, while processes waiting too long can be promoted to a higher-priority queue. This flexibility helps in reducing starvation and balancing workloads. However, it is more complex to design and implement, as it requires careful tuning of parameters like the number of queues, time quantum for each queue, and promotion/demotion rules.

- Idea: Similar to multilevel queue, but processes can move between queues.

- Advantages: Flexible, reduces starvation by promoting/demoting processes.

- Disadvantages: Complex to design and implement.

2. Non-Preemptive Scheduling

In non-preemptive scheduling, once a process starts execution on the CPU, it cannot be interrupted until it finishes its burst time or voluntarily releases the CPU. This type of scheduling is simple to implement and ensures that a process completes without unexpected interruptions. However, it is less responsive because high-priority processes may have to wait for a lower-priority or longer process to finish.

Examples of Non-Preemptive Scheduling:

- First-Come, First-Served (FCFS)

- Shortest Job First (SJF – non-preemptive version)

- Priority Scheduling (when implemented as non-preemptive)

- Highest Response Ratio Next (HRRN)

Below are the detailed explanations of each Non-Preemptive Scheduling algorithms

(a) First-Come, First-Served (FCFS)

In this method, processes are executed in the order they arrive in the ready queue. It works much like a queue in a supermarket where the customer who arrives first is served first. Since it is non-preemptive, once a process starts, it runs until completion without interruption.

- Idea: Processes are executed in the order they arrive.

- Advantages: Simple to implement and fair for processes that arrive earlier.

- Disadvantages: Can cause the convoy effect, where a long process delays all the shorter ones waiting behind it.

(b) Shortest Job First (SJF – Non-preemptive)

This algorithm selects the process with the shortest burst time and executes it first. The goal is to minimize average waiting time by always handling the quickest tasks before the longer ones. While very efficient, the limitation is that the operating system must know or estimate the burst time in advance, which is often difficult in real scenarios.

- Idea: The process with the smallest burst time executes first.

- Advantages: Produces the minimum average waiting time compared to other methods.

- Disadvantages: Requires advance knowledge of burst times and may lead to starvation for longer processes if short jobs keep arriving.

(c) Priority Scheduling (Non-preemptive)

In this method, each process is assigned a priority value, and the CPU is allocated to the process with the highest priority. If two processes have the same priority, the one that arrived first will execute. This ensures that critical or time-sensitive tasks are given preference over less important ones.

- Idea: CPU goes to the process with the highest priority.

- Advantages: Useful in systems where certain tasks are more important than others.

- Disadvantages: Lower-priority processes may suffer from starvation if higher-priority tasks keep arriving.

(d) Highest Response Ratio Next (HRRN)

This algorithm is designed to overcome the starvation problem of SJF and Priority scheduling. It chooses the process with the highest response ratio, which is calculated as:

Response Ratio = (Waiting Time + Burst Time) ÷ Burst Time

By considering both waiting time and burst time, HRRN ensures a balance between short processes and long processes. A process that has been waiting for a long time gets a higher response ratio, reducing the chances of starvation.

- Idea: Process with the highest response ratio executes first.

- Formula: Response Ratio = (Waiting Time + Burst Time) ÷ Burst Time

- Advantages: Reduces starvation and provides fairness.

- Disadvantages: Slightly more complex to calculate compared to FCFS or SJF.

FAQs

Q1. Which CPU scheduling algorithm is best for time-sharing systems?

Round Robin — because it ensures fair CPU time among all users.

Q2. Which algorithm gives minimum average waiting time?

SJF (Shortest Job First) — but only if burst times are known in advance.

Q3. How does aging solve starvation in priority scheduling?

By gradually increasing the priority of waiting processes.

Q4. What makes MLFQ better than MLQ?

Flexibility — MLFQ allows movement between queues based on behavior and aging.

Q5. Is Lottery Scheduling used in real OS?

Yes, in some experimental or research OS like Exokernel and Stride Scheduling variations.

Conclusion

CPU scheduling is essential for efficient multitasking and resource management in operating systems. It ensures fair and optimized allocation of CPU time among processes, improving system responsiveness, throughput, and CPU utilization. By understanding key terminologies and choosing suitable algorithms, operating systems can balance performance, responsiveness, and fairness, especially in multiprogramming and real-time environments.